During the past hundred years, academic philosophers have often not seemed like high achievers. Part of the reason for their relatively low achievements has been their failure to seriously study what they should have studied.

The job of a philosopher is mainly to try to formulate or recommend an intelligent worldview. How do you do that? The best way is by studying reality and by studying observations, looking for things that are the most revealing in terms of pointing towards the true nature of reality. You might think of it this way: reality is a kind of gigantic puzzle, and the job of a philosopher is to study and analyze the most important clues that reality is providing. A good philosopher should be like a detective, looking for clues of the greatest importance. But while a detective may mainly study crime scenes and interrogate witnesses to find clues that help figure out who killed some victim, the philosopher has the much bigger job of studying reality and the testimony of witnesses to find clues that shed light on the overall nature of reality.

Nowadays the person who studies to get a PhD in philosophy mainly spends his time studying the writings of other philosophers. That makes no sense. People hoping to spend their lives as philosophers should mainly be doing studies relevant to figuring out the nature of reality.

Below are topics that anyone should study in great depth before claiming that he has properly prepared himself to advance a philosophical worldview.

Omitted field of study #1: Anomalous neuroscience cases

The nature of the brain is a key issue in philosophy. A central question is: do brains adequately explain minds? That question cannot be properly addressed until you have very thoroughly studied brains and human mental phenomena in all their variety.

There is no proper study of the brain without studying anomalous cases in neuroscience. These include very many cases in which human intelligence and memory are only slightly weakened by large damage to the brain. A thorough study of such cases would include all the cases mentioned in this post, this post and this post. The cases would include ones such as these:

- Physician John Lorber's cases of many people who had above-average intelligence despite having lost most of their brains due to hydrocephalus.

- Many cases of people who experienced very little loss of mental ability or memory, even though half of their brains were removed in the operation known as hemispherectomy, to treat bad epileptic seizures.

- Karl Lashley's cases, in which animals trained to perform some task could perform the task well after various fractions of their brains were removed.

- Cases of people (sometimes called savants) who displayed far-above-normal mental powers of some type or some extraordinary mental skill despite having some severe physical shortfall of the brain.

If every person who gets a philosophy PhD were required to study such cases, then perhaps fewer philosophers would be so dogmatic in asserting that the human brain is the source of the human mind and the storage place of human memories, a dogma that is contradicted by many of these cases.

Omitted field of study #2: physical limitations of the body and the brain that seem to defy prevailing dogmas about brains, bodies and the origin of minds and bodies

It would be great if every person getting a PhD in philosophy were required to take a course entitled "Limiting Factors in the Human Body." The course would be entirely devoted to discussing discoveries that have been made about physical limitations of the human body.

The students would learn the very important fact that in the human cortex signals only travel across chemical synapses with a probability of 50% or less. They would also learn that brain cells are constantly bombarded by very severe noise of many different types. They would learn about the extremely short lifetimes of the protein molecules that make up synapses (only about two weeks or less), and they would read papers such as this one, stating facts such as "the synaptic turnover rate is as high as 1% per day in the visual cortex." We cannot explain memories persisting for decades by claiming they are stored in synapses being replaced at a rate such as 100% every four months. Quoting an even higher rate of synaptic turnover, the paper here states, "A recent imaging study revealed that the synaptic turnover rate in hippocampal CA1 cells is very high, with an estimated lifetime of 1–2 weeks (Attardo et al., 2015)."
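The turnover arithmetic above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function names are hypothetical, the 1% per day figure is the one quoted from the paper, and the two models (a simple linear reading, and one in which each synapse is independently replaced with a fixed daily probability) are simplifications chosen here, not something the quoted papers specify.

```python
# Illustrative arithmetic only. Both models below are simplifying
# assumptions made for this sketch, not claims taken from the cited papers.

DAYS_PER_MONTH = 30.44  # average length of a calendar month in days


def days_to_full_turnover(daily_rate: float) -> float:
    """Linear reading: days until cumulative turnover adds up to 100%."""
    return 1.0 / daily_rate


def surviving_fraction(daily_rate: float, days: int) -> float:
    """Independent-replacement reading: fraction of the original synapses
    never replaced after `days`, if each one is replaced with probability
    `daily_rate` on any given day."""
    return (1.0 - daily_rate) ** days


if __name__ == "__main__":
    rate = 0.01  # "as high as 1% per day", as quoted above
    days = days_to_full_turnover(rate)
    print(f"Linear reading: 100% cumulative turnover in {days:.0f} days "
          f"(about {days / DAYS_PER_MONTH:.1f} months)")
    for horizon in (120, 365, 3650):
        print(f"Independent reading: fraction left after {horizon} days = "
              f"{surviving_fraction(rate, horizon):.2e}")
```

Under the linear reading, a 1% daily rate adds up to 100% in 100 days, in line with the rough four-month figure. Under the independent-replacement assumption about 30% of the original synapses would remain at 120 days, with the remaining fraction shrinking toward zero over a span of years.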

The philosophy students would learn about the constant random restructuring that occurs in tiny brain units such as synapses and dendritic spines. The students would also learn about the physical limitations of DNA, and about how the only code it contains is one allowing it to only represent very low-level chemical information such as which amino acids make up a protein. The course would discuss how DNA molecules contain nothing like information specifying anatomy, and how DNA molecules fail to even specify the structure of cells or the organelles that make up cells. Such limitations are of the greatest philosophical relevance. They point to the great philosophical truth that we understand neither the physical origin of any human body nor the origin of any human mind.

Omitted field of study #3: The universe's fine-tuned laws and fundamental constants

A typical philosophy professor today is a person who admits that our planet is filled with organisms that have features that look like products of design; but he claims that this appearance is all a big illusion. Similarly, if a man stands on Liberty Island in New York Harbor, and watches what seems to be a giant leisure cruise ship pass by, he may claim that the ship is not at all what it appears to be. “Those swimming pools and sunbathers in bikinis are just to fool us,” the person may say, and may tell us, “The ship isn't really a leisure cruise ship – it's a military ship designed for amphibious attack.”

We should not be extremely impressed by the opinion of anyone dismissing as an illusion some huge case of apparent design in nature unless that person has studied all the cases of apparent design in nature. Fine-tuned organisms are only one such case. An equally weighty matter is the fact that the physical laws of the universe and the fundamental constants of the universe seem extremely fine-tuned to allow the existence of life. An adequate study of such things is a very complicated matter requiring at least 3 semester hours of study in a university course or equivalent independent study.

The need for the study of such things may be made clear by the following hypothetical conversation between Mary and an evolutionary biologist named John:

Mary: The biology of organisms is so fine-tuned. It must not be the result of chance, but of purpose.

John: No, take it from me, that's all just an illusion. It's all just chance.

Mary: But what about the universe's fundamental constants and laws of nature? They seem as fine-tuned as the organisms on our planet. And no one has any theory as to how that could have happened by chance.

John: Well, I don't know anything about THAT. But take it from me, the apparent fine-tuning in biology is just chance, not purpose or design.

In this conversation, John seems like a poorly prepared thinker who has reached a conclusion based on less than half a consideration of the evidence. Given three gigantic cases of what looks like fine-tuning in nature (the universe's fundamental constants, the universe's laws, and the universe's biology), John seems to have drawn a conclusion based on only one of these things, which doesn't seem like anything resembling intellectual thoroughness.

Omitted field of study #4: paranormal phenomena

There is a huge amount of evidence that paranormal phenomena exist, and that humans can have experiences that cannot be explained through conventional theories of biology. If paranormal phenomena exist, they are of the highest relevance to the claims of philosophers.

For example, let us consider the claims of typical philosophy professors. Such persons typically claim that all human abilities have an evolutionary explanation. Even without considering paranormal phenomena, this claim has seemed utterly implausible to many. One problem is that humans seem to have many mental characteristics and capabilities that cannot at all be explained as being the result of natural selection: things like philosophical reasoning ability, scientific reasoning, advanced mathematical reasoning, artistic creativity, spirituality, and altruism. Such things would not have increased a human's likelihood of surviving in the wild, and cannot be explained as the product of natural selection. This was admitted by the co-founder of the theory of natural selection, Alfred Russel Wallace, who wrote a long essay explaining carefully why natural selection cannot explain the more advanced human mental capabilities.

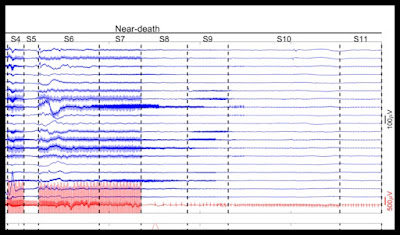

In the case of paranormal phenomena, we have another group of things that cannot be explained by natural selection or any evolutionary theory. For example, if you have some soul that goes out of your body during a near-death experience, or that may be seen by another as an apparition sighting, then humans have some great aspect that cannot at all be explained by evolutionary concepts. And if humans have extrasensory perception or remote viewing capabilities, that's some other big human reality that cannot be explained by natural selection. Also, if such things exist, they cast very great doubt on all claims by neuroscientists that the human mind is merely created by the brain (or an aspect of the brain).

So philosophers should be studying evidence for paranormal phenomena, because such evidence seems to indicate that biology dogmas are wrong. Just as a physicist arguing for some theory (such as dark matter) should be making a thorough examination of all evidence that seems to contradict that theory (along with the evidence seeming to favor it), a philosopher should be making a thorough examination of all evidence seeming to contradict any theory he advances about the origin of humans or the nature of the mind (along with any evidence seeming to support such a theory). A philosopher who only looks at evidence for his theory (and not evidence against it) is only doing half of his job.

Omitted field of study #5: engineering

Darwinism can accurately be described as an engineering opinion. Darwinism is the opinion that biological engineering effects more complex and intricate than any produced by human engineers can be produced by purely mindless natural processes. Those engineering effects are things like the human reproductive system, the human vision system, each of the cell types in our body, and so forth. But there is the problem that the people who advance the engineering opinion known as Darwinism are almost all people who know nothing about engineering, for they've never done any actual engineering. Remarkably, we are sometimes asked to base our entire worldview on the engineering opinion of philosophy professors who can be correctly described as "engineering know-nothings," because they lack any knowledge of or experience with engineering.

A general course in engineering should be mandatory for anyone getting a PhD in philosophy. Such a course could include a thorough study of software engineering. Students would learn about how engineering products are never produced by the process of accumulation that evolutionary biologists claim causes magnificent biological engineering effects. They would learn that great examples of engineering such as bridges and complex computer programs result not from some “piling up” process of accumulation, but from the extremely coordinated efforts of teams of intelligent workers and designers directed to achieve particular engineering ends. They would learn that while software engineers have played around with evolutionary algorithms for decades, such algorithms are not significantly used in the production of commercial software, because they are not effective in producing impressive engineering results.

Omitted field of study #6: computer information systems

Philosophers often maintain that the brain is like a computer in your head. A philosopher may maintain that your brain retrieves memories kind of like the way a computer retrieves data stored on it. The people who make such claims usually know very little about the internal workings of computers, the intellectual limits of computers, and how computers store and retrieve information.

Before someone gets a PhD in philosophy, he should be required to pass a course explaining the details of computer information systems, with an emphasis on exactly how they store and retrieve information and exactly how they perform computing tasks. In such a course the PhD student would learn facts such as these:

There is no computer that has any real understanding of anything, and computers have no actual mental experiences such as humans have.

Computers are able to store information reliably because they have physical components such as hard drives and zip drives where information can exist in a stable unchanging state for years. In this sense, they are entirely different from what we find in human synapses, where the average lifetime of protein molecules is only a few weeks or less, meaning synapses have none of the stability that computer information storage components have.

Computers are able to transmit information very reliably by using very low-noise electrical circuits completely different from the very high-noise neurons and synapses in the brain, which seem to be incapable of transmitting information very reliably.

Computers are able to retrieve information very quickly because they use a variety of things (such as indexing and a storage positioning system) unlike anything that exists in the human brain. Your computer knows how to retrieve something from some very exact coordinate on a hard drive, but the brain has no coordinate system allowing retrieval from very exact positions.

A computer is able to retrieve information quickly and write information quickly because it has exact hardware for doing both of those things (what is called a read-write head), and that component is unlike anything that exists in the brain, where no one has found either a mechanism for writing memories or a mechanism for reading them.

A computer is able to store information because it makes use of sophisticated encoding protocols carefully worked out by designers, things such as the ASCII code, and the algorithm for converting ASCII to binary. No such encoding protocols have ever been discovered in the brain, with the exception of the genetic code that can only represent amino acids rather than learned memories.

A computer is able to perform tasks such as information storage and retrieval because it has a whole very elaborate software infrastructure (such as an operating system) unlike anything known to exist in the human brain or in DNA.
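The storage and retrieval facts listed above can be made concrete with a minimal Python sketch. It is an illustration only: the `TinyStore` class, the `ascii_to_bits` helper and their method names are hypothetical, and a real file system is far more elaborate. The sketch shows an agreed encoding (ASCII), an index recording the exact position of each stored item, and retrieval that jumps straight to that position.

```python
def ascii_to_bits(text: str) -> str:
    """Encode text as ASCII and show each byte as eight binary digits."""
    return " ".join(format(byte, "08b") for byte in text.encode("ascii"))


class TinyStore:
    """A toy 'disk' (hypothetical, for illustration): one flat byte array
    plus an index that maps each key to an exact (offset, length) position."""

    def __init__(self) -> None:
        self._disk = bytearray()  # stable storage: bytes stay put once written
        self._index = {}          # key -> (offset, length)

    def write(self, key: str, text: str) -> None:
        encoded = text.encode("ascii")  # agreed encoding protocol
        self._index[key] = (len(self._disk), len(encoded))
        self._disk.extend(encoded)

    def read(self, key: str) -> str:
        # Retrieval goes straight to the recorded position; no scan is needed.
        offset, length = self._index[key]
        return bytes(self._disk[offset:offset + length]).decode("ascii")


if __name__ == "__main__":
    print(ascii_to_bits("Cat"))
    store = TinyStore()
    store.write("greeting", "hello")
    store.write("name", "Plato")
    print(store.read("name"))
```

Each ingredient here (the fixed encoding, the index, the addressable storage) is something a designer had to put in place deliberately before any retrieval could work.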

After studying all the very many things that computers have that brains do not have, our philosophers might begin to see some powerful reasons (30 of which are listed here) for rejecting the claims that the human brain stores memories and that the human brain is the cause of human thinking.

Omitted field of study #7: cosmology

Our philosophers would probably not benefit from studying cosmology by reading the typical triumphal accounts of the topic. But they might benefit from reading critical accounts of cosmology that tell us about how cosmology is a field of study dominated by scientists and science journalists describing as facts things for which there is no direct observational evidence: things such as dark matter, dark energy and primordial "cosmic inflation" (not to be confused with the Big Bang). A good starting point might be this recent article and the much older papers here ("Sociology of Modern Cosmology" by Martin Lopez-Corredoira) and here ("Non-standard Models and the Sociology of Cosmology" by the same author). After studying how a major branch of science can become dominated by unproven dogmas and very great overconfidence, our philosopher might become more likely to consider whether the same thing has happened in other branches of science.

Omitted field of study #8: undisputed cases of anomalously exceptional human mind performance

There are many cases of exceptional human mind performance that are not referred to as paranormal, but which involve the most astonishing display of wondrous human abilities. A study of such cases should be made by every person studying to be a philosophy professor.

Below are some examples:

- Stephen Wiltshire has repeatedly shown the ability to accurately draw an entire skyline after seeing it only one time.

- Mathematician and computer scientist Herman Goldstine wrote this about the legendary mathematician John von Neumann: "One of his remarkable abilities was his power of absolute recall. As far as I could tell, von Neumann was able on once reading a book or article to quote it back verbatim; moreover, he could do it years later without hesitation."

- According to an article in the LA Times, Kim Peek could recall the contents of 12,000 books he had read, even though his brain was severely damaged, and he lacked most or all of the corpus callosum fibers that connect the two hemispheres of the brain.

- According to one book, "John Fuller, a land agent, of the county of Norfolk, could correctly write out a sermon or lecture after hearing it once; and one, Robert Dillon, could, in the morning, repeat six columns of a newspaper which he had read the preceding evening. More wonderful still was George Watson, who... could tell the date of every day since his childhood and how he had occupied himself on that day."

- The mathematician Leonhard Euler could recite the entire Aeneid from beginning to end, a work of 9896 lines.

- Mezzofanti could speak thirty different languages very well.

- A four-year-old girl demonstrated on TV her ability to speak seven different languages.

- Numerous Muslim scholars have memorized all 6000+ verses of their holy book, and some did this as early as age 10.

- According to a book, "The great thinker, Pascal, is said never to have forgotten anything he had ever known or read, and the same is told of Hugo, Grotius, Liebnitz, and Euler. All knew the whole of Virgil's 'Aeneid' by heart."

- The famous conductor Toscanini was able to keep conducting very successfully despite bad eyesight, because he had memorized the musical scores of a very large number of symphonies and operas.

- According to a book, a waiter in San Francisco could recall exactly what any customer had previously ordered, even if the customer had not visited the restaurant in years.

- The artist Franco Magnani (famed as "the Memory Artist") was able to draw "photographically accurate" drawings of his hometown that he had not seen in more than 30 years.

- G. C. Leland says: "It is recorded of a Slavonian Oriental Sect called the Bogomiles, which spread over Europe during the middle ages, that its members were required to memorize the Bible verbatim. Their latest historian, Dragomanoff, declares that there were none of them who did not memorize the New Testament at least; one of their bishops publicly proclaimed that, in his own diocese of four thousand communicants, there was not one unable to repeat the entire scriptures without an error."

- Akira Haraguchi was able to recite correctly from memory 100,000 digits of pi in 16 hours, in a filmed public exhibition.

- The fascinating 47-minute video here "The Boy Who Can't Forget" documents cases of Highly Superior Autobiographical Memory (HSAM), also called hyperthymesia. According to the article here a scientist named McGaugh "is adamant that the super memory demonstrated by the small number of people he and others have identified represents a genuine phenomenon." People with such a Highly Superior Autobiographical Memory (including Jill Price and Aurelien Hayman) can recall what happened to them every day in the past ten years.

- A book tells us this: "The geographer Maretus, narrates an instance of memory probably unequalled. He actually witnessed the feat, and had it attested by four Venetian nobles. He met in Padua, a young Corsican who had so powerful a memory that he could repeat as many as 36,000 words read over to him only once. Maretus, desiring to test this extraordinary youth, in the presence of his friends, read over to him an almost interminable list of words strung together anyhow in every language, and some mere gibberish. The audience was exhausted before the list, which had been written down for the sake of accuracy, and at the end of it the young Corsican smilingly began and repeated the entire list without a break and without a mistake. Then to show his remarkable power, he went over it backward, then every alternate word, first and fifth, and so on until his hearers were thoroughly exhausted, and had no hesitation in certifying that the memory of this individual was without a rival in the world, ancient or modern."

- Encyclopedia.com refers to the "miraculous photographic memory" of Thomas Babington Macaulay.

- Wikipedia.org states this about Daniel Tammet: "One of his most notable achievements was being able to recite Pi to 22,514 decimal places, taking him over five hours."

- According to an article on bbc.com, "Ask Nima Veiseh what he was doing for any day in the past 15 years, however, and he will give you the minutiae of the weather, what he was wearing, or even what side of the train he was sitting on his journey to work."

- Derek Paravicini was born 25 weeks early, with severe brain damage, but he has reliably demonstrated countless times the ability to very accurately play back on a keyboard any song that is played to him, note for note, even if he has never heard the song before.

- A nineteenth-century work describes a similar ability in a prodigy known as Blind Tom: "The doctor then called for some one of the audience to come and play a piece of music for the first time in Tom's hearing, promising a very faithful imitation; Miss Jones was persuaded to play a piece of her own composition, and hence unknown to Tom and the audience....When the lady was through and escorted from the stage, Tom sat down and played it through perfectly." The next page states, "Tom executes some of the most difficult pieces of Beethoven, Mendelssohn, Bach, Gottschalk, Thalberg and others, and these he learnt by hearing them played."

Omitted field of study #9: sociology and social psychology

A belief community is any tight-knit community that shares common beliefs that involve assuming the truth of things that are not simple observational facts. Belief communities do not exist solely in religion. There are also political belief communities and academic belief communities.

Sociologists and social psychologists study the common characteristics of belief communities. Typical characteristics of a belief community include the following:

(1) A tendency to adhere to some core belief system commonly professed by members of the belief community.

(2) A tendency to idolize or lionize one or more revered authority figures, who tend to be "put on pedestals."

(3) A tendency to denigrate or slight certain groups of people who are outside of the belief community, particularly those who advance opposing belief systems.

(4) A tendency to encourage a particular set of norms, taboos, legends, behavior patterns and speech customs that tend to be followed by members of the belief community.

(5) A hierarchical system of authority with multiple tiers of authority, examples being the many-tiered authority system in the Catholic Church and the very similar many-tiered authority system in academia.

The typical philosophy professor is very much a member of a belief community. The dogma that the fantastically complex hierarchical organization of biological systems all arose by chance biological processes is very much an unproven article of faith, a dogma taught by a belief community such a person belongs to. The dogma that the wonders of the human mind (such as instant memory recall and the good preservation of fifty-year-old memories) are all merely produced by the brain is very much an unproven article of faith, a dogma taught by a belief community such a person belongs to (and also a dogma inconsistent with many low-level facts discovered by neuroscientists). But sadly our philosophy professors typically fail to perceive that they are members of a belief community. They tend to think that everything they believe is "biological science," just as the members of the Marxist belief community believed that all of their beliefs were "economic science" or "historical fact," and just as fundamentalists often think that all of the miracle narratives in their scriptures are "recorded history." Were our philosophers to make a good thorough study of sociology and social psychology, they might gain some insight as to the social dynamics and cultural conformity factors that help to explain their speech customs, and they might be less dogmatic in pushing unproven claims.

Omitted field of study #10: biological complexity and biological organization

A human being is a biological organism. To teach intelligent philosophical opinions about human beings, a philosopher needs to understand what a human being is physically. A deep study of biological complexity and biological organization should be mandatory for anyone claiming to have properly prepared to be a philosopher. But today the average philosophy professor seems to have no training in biology.

So we have many philosophy professors making silly, stupid claims such as the claim that a human body is merely a "bundle of atoms," a claim that would not tend to be made by any adequate scholar of the vast amount of physical organization in human bodies. And we have many other philosophy professors parroting unfounded claims of biologists (such as myths about DNA body blueprints), myths that they would not be repeating if such philosophy professors had deeply studied biology in the type of critical, questioning manner that a philosopher should study things.


Other mistakes of academic philosophers

One of the biggest mistakes of contemporary philosophers has been the gigantic mistake of being intimidated by scientists in the institutions where they teach. It's rather easy to understand why this might have occurred. The typical university lavishes spending on science departments, leaving philosophy departments with relatively little money. So at a university you might have a situation in which the science departments dwarf the philosophy department in funding.

Given a situation like this at many universities and colleges, you can perhaps understand why contemporary professors of philosophy have tended to act as meek lapdogs or credulous parrots, meekly repeating many an unfounded claim of scientists. But that is not the proper role of a philosopher. A philosopher should be fearless about challenging authority in all places, always ready to speak truth to power.

A model of how a philosopher should act was given to us thousands of years ago in the dialogues of Plato. In countless places in such dialogues we have a case where some overconfident person claims to understand some weighty complex matter he does not really understand. In the dialogues of Plato, the character of Socrates is constantly acting as a brake against such overconfidence. Constantly asking the right questions and making the right challenges, Socrates again and again leads the overconfident person to realize that he knows much less than he thinks, and that the issue is much more complex than he originally thought.

Contemporary philosophers have failed to use the dialogues of Plato as inspiration. The world is filled with overconfident scientists pretending to know things they do not actually know. But I cannot recall ever reading a long interview of a scientist by an interrogative philosopher, in which the philosopher exposed the scientist's unwarranted claims and overconfidence. It is rather as if scientists have hypnotized philosophers into an unquestioning submission.

To the philosophers who got so spellbound, I say: awake from the trance, and start acting in the questioning, critical, dogma-challenging way that philosophers should act.